The Problem Most Organizations Have

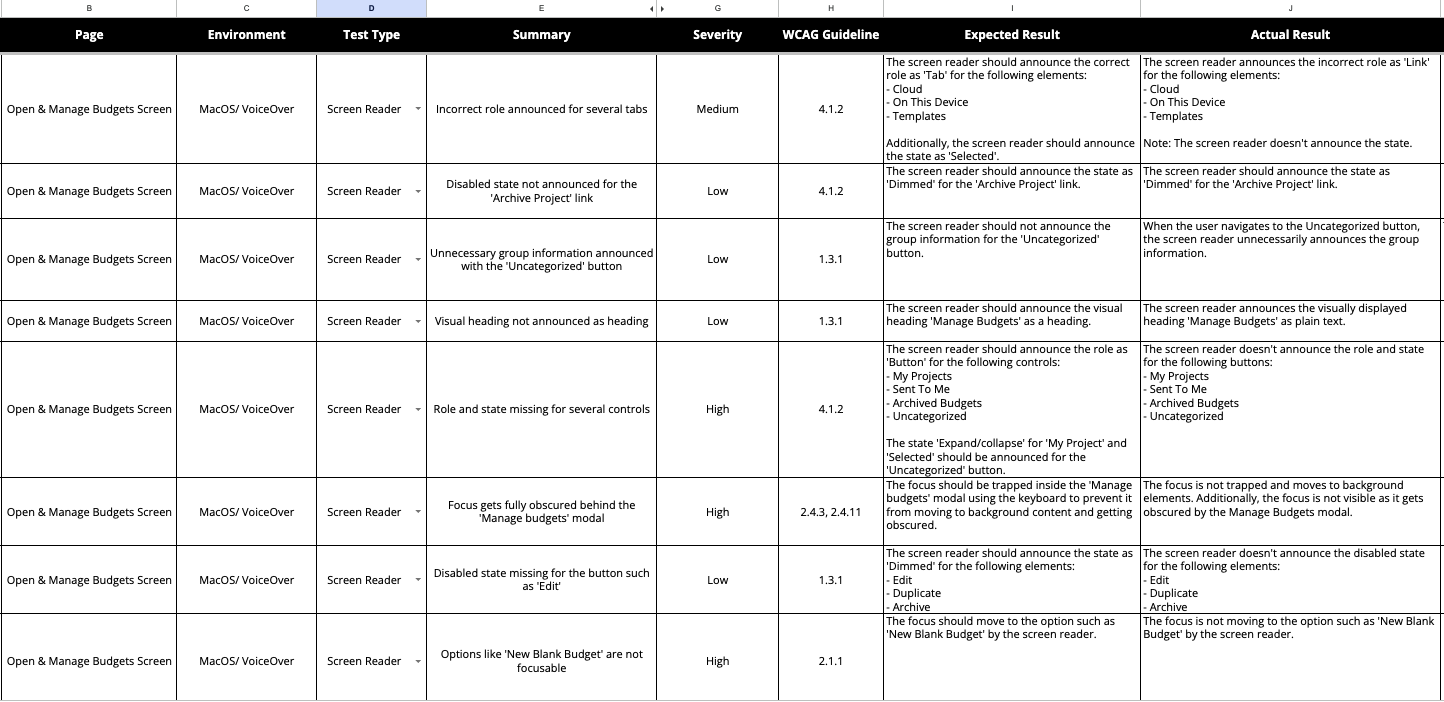

Accessibility work at most organizations is reactive. A legal threat arrives, or a procurement requirement surfaces, and someone commissions an audit. The audit produces a spreadsheet of WCAG violations. The spreadsheet gets triaged. Some items get fixed. The spreadsheet goes into a drawer. Two years later the cycle repeats, and most of the same problems are still there.

I've spent more than fifteen years learning why that pattern persists and building an alternative to it. The short version: accessibility is an organizational capability, not an audit deliverable. One-off evaluations produce reports. Programs produce teams that ship accessible products. The difference is whether accessibility knowledge lives in a document or in the people who design and build the software.

What follows is not a single case study. It's the story of how I developed a practice - a repeatable approach to accessibility evaluation, program design, and organizational change - and applied it across six organizations over fifteen years.

Standing Up a Program from Scratch

Turning Technologies, 2010

My first serious accessibility engagement was also the most formative. Turning Technologies made audience response systems: hardware clickers and desktop software used in classrooms and conference settings. Federal agencies were a key market, which meant Section 508 compliance wasn't optional. But the company had no accessibility infrastructure: no standards, no evaluation process, no documentation, no training, and no one whose job it was to make any of that happen.

I became that person. As program driver, I built a cross-discipline team that included product management, R&D, QA, marketing, web development, and training. The scope covered everything the company shipped: hardware devices, desktop software for PC and Mac, and the public-facing website.

The program had five workstreams running in parallel: evaluation training so the team could assess products themselves, product-by-product assessments against Section 508, remediation planning with engineering, VPAT documentation for procurement, and the creation of internal accessibility design standards. We also built a public accessibility area on the company website, which served as a signal to Federal buyers that accessibility was an organizational commitment, not a checkbox.

The evaluation methodology I developed at Turning - keyboard navigation, screen magnification, and screen reader testing - became the foundation for every accessibility engagement that followed.

The formative lesson was simple and hard: the technical evaluation was the easy part. The real work was building the organizational infrastructure that made accessibility sustainable. Training people, establishing standards, creating documentation workflows, getting buy-in from engineering leads who had competing priorities. Without that infrastructure, even well-intentioned teams drift back to inaccessible defaults within a release cycle or two.

Developing the Three-Method Approach

Golden 1 Credit Union, 2019

Nine years after Turning Technologies, Golden 1 Credit Union hired me to conduct their first-ever accessibility review. The context was different - the product was a consumer-facing financial services website rather than enterprise hardware - but the underlying challenge was the same. No one at the organization had systematically evaluated the site's accessibility. They didn't know what they didn't know.

This engagement is where I formalized the three-method evaluation approach that became my standard practice:

Automated code inspection scans pages against WCAG success criteria programmatically. It catches the low-hanging fruit - missing alt text, insufficient color contrast, malformed ARIA attributes - quickly and at scale. I used an accessibility audit tool to scan ~70 pages of golden1.com. The automated scan surfaced issues at Level A, Level AA, and Level AAA across the site.
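The audit tool used in that engagement isn't named here, so the following is only a minimal sketch of what this class of check does, not that tool's implementation. It uses Python's standard `html.parser` to flag two of the issue types mentioned above: images with no `alt` attribute and form controls with no label. The class name `BasicA11yScanner`, the `scan` helper, and the sample markup are all hypothetical, and the rules are far cruder than a real scanner's.

```python
from html.parser import HTMLParser


class BasicA11yScanner(HTMLParser):
    """Illustrative only: flags images without alt and form controls without a label."""

    # Input types this sketch does not check (a simplification, not a WCAG rule).
    SKIPPED_TYPES = {"hidden", "submit", "button", "reset", "image"}

    def __init__(self):
        super().__init__()
        self.issues = []            # (line, message) pairs found so far
        self.label_targets = set()  # ids referenced by <label for="...">
        self.unresolved = []        # (line, id) of controls still needing a label
        self.label_depth = 0        # > 0 while inside a <label> element

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        line, _ = self.getpos()
        if tag == "img" and "alt" not in attrs:
            # alt="" is allowed (decorative image); a missing attribute is not.
            self.issues.append((line, "img is missing an alt attribute"))
        elif tag == "label":
            self.label_depth += 1
            if attrs.get("for"):
                self.label_targets.add(attrs["for"])
        elif tag in ("input", "select", "textarea"):
            input_type = (attrs.get("type") or "text").lower()
            if tag == "input" and input_type in self.SKIPPED_TYPES:
                return
            if attrs.get("aria-label") or attrs.get("aria-labelledby"):
                return
            if self.label_depth > 0:  # wrapped in a <label>, so implicitly labelled
                return
            self.unresolved.append((line, attrs.get("id")))

    def handle_endtag(self, tag):
        if tag == "label" and self.label_depth > 0:
            self.label_depth -= 1

    def report(self):
        """Resolve label references at the end, since a label may follow its control."""
        unlabeled = [
            (line, "form control has no associated label")
            for line, control_id in self.unresolved
            if control_id not in self.label_targets
        ]
        return sorted(self.issues + unlabeled)


def scan(html):
    scanner = BasicA11yScanner()
    scanner.feed(html)
    scanner.close()
    return scanner.report()


# Hypothetical markup, not taken from any audited site.
SAMPLE = """<img src="logo.png">
<img src="spacer.gif" alt="">
<label for="user">Username</label>
<input id="user" type="text">
<input id="pin" type="password">
<label>Email <input type="email"></label>
"""

for line, message in scan(SAMPLE):
    print(f"line {line}: {message}")
```

Run against the sample, the sketch reports the first image and the password field and leaves the other elements alone. Checks of this kind are cheap to run across dozens of pages, which is where the speed of automated inspection comes from. They say nothing about whether the alt text or label that is present actually makes sense to a person.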

Expert walkthrough catches what the scanner can't. I navigated the site using only a keyboard, then again using a screen reader, evaluating interaction patterns, focus management, content structure, and semantic markup. This is where structural problems surface: tab traps, illogical focus order, controls that work with a mouse but disappear under keyboard navigation.

Task-based testing with participants with disabilities catches what neither of the first two methods can. Twelve participants - people with visual disabilities using screen readers and people with mobility disabilities using keyboard-only navigation - attempted core tasks on the site. Their experience revealed barriers that no automated tool or expert review would have flagged, because the barriers lived in the gap between what the interface technically supported and what a person could actually accomplish.

The findings were instructive. The site did not meet the minimum Level A threshold of accessibility as defined by WCAG. But the issues were largely structural rather than architectural: missing alt text, unlabeled form controls, heading hierarchy problems. Most were categorized as easy to remediate. Task completion among participants with disabilities was high. The bones were good, but the details needed work.

The deeper finding was methodological. Each of the three methods caught problems the other two missed. Automated scanning found issues that would have taken days or weeks to find manually. The expert walkthrough found interaction-level problems - a menu trap, inconsistent focus behavior - that scanners can't easily detect. And participant testing surfaced usability barriers that were invisible to both: a branch locator that technically met WCAG criteria but that users employing a screen reader couldn't navigate efficiently enough to actually use.

Embedding Accessibility Upstream

LogicMonitor, 2021

The Golden 1 engagement was a site-level audit: evaluate what's been built, report what's broken, recommend fixes. The LogicMonitor engagement shifted the intervention upstream.

LogicMonitor was finalizing a new design system, which is the component library that every product team would use to build interfaces. The question wasn't whether the finished product was accessible. The question was whether the building blocks themselves were accessible, so that everything assembled from them would inherit a baseline of conformance.

I evaluated the design system's components against WCAG criteria, focusing on keyboard operability, semantic structure, ARIA patterns, focus management, and color contrast. The deliverable wasn't a pass/fail report on a website. It was guidance for the design system team: which components had accessibility issues baked in, what the impact would be as those components propagated across the product, and what needed to change before the system was declared stable.

This was a different kind of leverage. Fix a button component once in the design system and every screen that uses it inherits the fix. Ship a button component with a focus management bug and every screen that uses it inherits the bug. The economics of accessibility work change fundamentally when you move the intervention to the component layer.
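Color contrast is the easiest place to see the component-layer effect in code, because WCAG defines the contrast ratio as a formula over relative luminance. The sketch below applies that formula to a small table of design tokens and reports which foreground/background pairs fall below the 4.5:1 Level AA threshold for normal-size text. The token names and hex values are hypothetical and are not LogicMonitor's. The luminance constants come from the WCAG 2.x definition.

```python
AA_NORMAL_TEXT = 4.5  # WCAG 2.x Level AA minimum contrast for normal-size text


def relative_luminance(hex_color):
    """Relative luminance of an sRGB color given as '#RRGGBB' (WCAG 2.x definition)."""
    hex_color = hex_color.lstrip("#")
    channels = [int(hex_color[i:i + 2], 16) / 255 for i in (0, 2, 4)]
    # Convert each gamma-encoded channel to linear light.
    r, g, b = [
        c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4
        for c in channels
    ]
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background):
    """Contrast ratio between two colors, from 1 (identical) to 21 (black on white)."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def failing_tokens(tokens, threshold=AA_NORMAL_TEXT):
    """Names of token pairs whose contrast ratio is below the threshold."""
    return [
        name
        for name, (foreground, background) in tokens.items()
        if contrast_ratio(foreground, background) < threshold
    ]


# Hypothetical design tokens: name -> (text color, background color).
TOKENS = {
    "button.primary": ("#FFFFFF", "#0055CC"),
    "text.muted": ("#777777", "#FFFFFF"),
}

for name in failing_tokens(TOKENS):
    fg, bg = TOKENS[name]
    print(f"{name}: {contrast_ratio(fg, bg):.2f}:1 is below {AA_NORMAL_TEXT}:1")
```

Here `text.muted` fails, because `#777777` on white comes out just under 4.5:1. A check like this run against the token file catches the problem once, at the source, instead of on every screen that later uses the token.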

Teaching the Practice

Kent State University, 2014-2021

While the consulting practice was developing through client engagements, I was also codifying the methodology into something teachable. As leader of the Master of Science in User Experience program at Kent State, I created and taught a course on Accessibility Evaluation and Universal Design.

The course covered WCAG conformance evaluation, assistive technology testing, and the organizational dynamics of accessibility adoption - the same combination of technical and organizational knowledge that my consulting work had shown was necessary. Students learned to run all three evaluation methods and to communicate findings in ways that engineering teams could act on.

Teaching forced rigor. When you have to explain to graduate students why you run three methods instead of one, or why an automated scan alone is insufficient, you have to articulate the reasoning precisely. The course sharpened the practice as much as the practice informed the course.

Measuring Longitudinal Change

Golden 1 Credit Union, 2024

Five years after the initial audit, Golden 1 brought me back. The site had been redesigned. The question was whether the new site met WCAG 2.2 Level AA - a higher bar than the 2019 evaluation, both because the standard had been updated and because the target conformance level was higher.

I ran the same three-method approach, now refined through several more years of practice. Nine participants with visual and mobility disabilities completed task-based testing. The automated scan and expert walkthrough covered the redesigned site architecture.

The good news: the site had improved in meaningful ways. A skip-to-main-content control was present on every page. Tab order was logical. The mega-menu was navigable by keyboard, including the arrow keys. Font readability and contrast were generally strong. Participants noted that Golden 1 had made more effort toward accessibility than many of its competitors.

The sobering news: the site still did not conform to all of the WCAG Level A success criteria. Missing alt text, unlabeled controls, and structural issues persisted. These were the same categories of problems identified five years earlier. Design-level barriers appeared in the site search, branch locator, account opening flow, credit card comparison tool, and account comparison feature.

This is the data point that crystallized fifteen years of accumulated conviction. A well-resourced organization, with genuine intent to improve, redesigned its entire website over a five-year period - and still didn't meet the minimum conformance threshold. The people involved cared. The investment was real. But the organizational capability to sustain accessible design across a full redesign cycle wasn't in place.

This is why I keep returning to program building as the unit of impact rather than the audit. Audits tell you what's broken. Programs change how teams build, which changes what they ship, which changes what users experience. Without the program layer, the next redesign will produce the next round of the same findings.

Conformance Documentation at Scale

Entertainment Partners, 2025

The most recent application of the practice sits at the compliance end of the spectrum. Entertainment Partners produces Movie Magic Budgeting and Movie Magic Scheduling, which are desktop applications used across the film and television industry. They needed VPAT 2.5 conformance reports for both products, evaluated against WCAG 2.2 Level A and Level AA criteria plus Revised Section 508.

I evaluated both applications using keyboard navigation, screen magnification, and screen reader testing, then documented the findings in the standardized VPAT format that procurement teams require. The assessment was granular: every WCAG success criterion received a conformance rating of Supports, Partially Supports, Does Not Support, or Not Applicable, with remarks explaining each finding.
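The per-criterion structure described above maps naturally onto a small data model. The sketch below is one way to represent it, assuming nothing about how the actual reports were produced: the four ratings are the ones named above, while the class names, the helper functions, and the rule that a Partially Supports or Does Not Support row must carry remarks are assumptions made for illustration. The example rows are placeholders, not findings from either product.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Conformance(Enum):
    SUPPORTS = "Supports"
    PARTIALLY_SUPPORTS = "Partially Supports"
    DOES_NOT_SUPPORT = "Does Not Support"
    NOT_APPLICABLE = "Not Applicable"


@dataclass(frozen=True)
class CriterionResult:
    criterion: str        # WCAG success criterion, e.g. "2.1.1 Keyboard"
    level: str            # "A" or "AA"
    rating: Conformance
    remarks: str = ""


def summarize(results):
    """Count how many criteria received each conformance rating."""
    return Counter(result.rating for result in results)


def rows_needing_remarks(results):
    """Criteria rated below Supports that have no explanatory remarks (assumed rule)."""
    needs_explanation = {Conformance.PARTIALLY_SUPPORTS, Conformance.DOES_NOT_SUPPORT}
    return [
        result.criterion
        for result in results
        if result.rating in needs_explanation and not result.remarks.strip()
    ]


# Placeholder rows for illustration only; these are NOT actual evaluation results.
EXAMPLE_ROWS = [
    CriterionResult("1.1.1 Non-text Content", "A",
                    Conformance.PARTIALLY_SUPPORTS, "placeholder remark"),
    CriterionResult("2.1.1 Keyboard", "A", Conformance.SUPPORTS),
    CriterionResult("1.4.3 Contrast (Minimum)", "AA", Conformance.DOES_NOT_SUPPORT),
    CriterionResult("1.2.2 Captions (Prerecorded)", "A",
                    Conformance.NOT_APPLICABLE, "placeholder remark"),
]

for rating, count in summarize(EXAMPLE_ROWS).items():
    print(f"{rating.value}: {count}")
print("Missing remarks:", rows_needing_remarks(EXAMPLE_ROWS))
```

Keeping the ratings in a structured form like this makes it easy to check a report for completeness before it goes to a buyer, and to compare ratings between product versions.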

This work represents the documentation layer of the practice. Evaluation without documentation is invisible to the procurement processes that increasingly gate purchasing decisions on accessibility conformance. VPATs translate evaluation findings into the formal language that buyers, legal teams, and compliance officers need.

What Fifteen Years Taught Me

The technical methodology - automated scanning, expert walkthrough, user testing with participants with disabilities - has been stable for a decade. It works. Each method catches what the others miss. I run all three every time.

What changed over fifteen years is my understanding of where the leverage is. Early in the practice, I thought the highest-value work was the evaluation itself: find the problems, report them clearly, hand over the recommendations. That's necessary work and I still do it. But it's not where lasting change comes from.

Lasting change comes from building the organizational capability to ship accessible products as a matter of course. That means training, standards, design system integration, documentation workflows, and leadership buy-in. It means making accessibility part of how teams work, not something that gets checked after they're done.

The Golden 1 longitudinal finding is the proof. Good intentions and a complete website redesign weren't enough to close the gap. What was missing wasn't technical knowledge. It was the organizational infrastructure to apply that knowledge consistently across every page, every component, every interaction, over the full lifecycle of a product.

That pattern - the gap between what an organization knows and what it consistently does - is the same problem I've worked on across every domain in my career. In accessibility, in research operations, in human-automation interaction. The unit of change isn't the deliverable. It's the capability.